Amazon Trainium chips are set to revolutionize the landscape of artificial intelligence by providing a powerful and efficient solution for AI model training. Developed under the direction of Annapurna Labs as part of Amazon Web Services (AWS), these cutting-edge chips, exemplified by the latest Trainium 2 model, aim to challenge Nvidia’s historical dominance in the AI hardware market. With Project Rainier, Amazon is investing heavily in creating one of the world’s largest data center clusters, designed specifically for AI applications. This strategic shift not only enhances AWS’s capabilities but also opens the door to advanced computational power that could lead to significant breakthroughs in AI research and development. As demand skyrockets for robust AI chips, Amazon Trainium chips are positioned to play a central role in meeting that need and driving innovation across industries.

The Amazon Trainium chips represent a significant advancement in the field of AI semiconductor technology, paving the way for enhanced machine learning capabilities. These processors, created by Amazon’s Annapurna Labs division within AWS, mark a strategic initiative to compete with established suppliers such as Nvidia by leveraging in-house chip design for artificial intelligence applications. By establishing Project Rainier, AWS is not only building immense server infrastructure but also positioning itself as a leader in the rapidly evolving artificial intelligence landscape. This move aligns with a broader trend among tech giants to reduce reliance on external suppliers and develop proprietary solutions that propel their AI strategies. As various companies vie for dominance in AI, Trainium chips could have a substantial impact on cloud computing and AI advancements.

Understanding Amazon’s Ambition: The Trainium Chips Revolution

Amazon’s latest innovation, the Trainium chip family, represents a significant leap in artificial intelligence processing. Expected to deliver performance improvements relative to existing Nvidia counterparts, Trainium chips are designed specifically for machine learning tasks, aligning with Amazon’s long-term vision for its cloud service offerings through Amazon Web Services (AWS). The Trainium project seeks not only to reduce the costs associated with AI workloads but also to redefine the standards for computational power in AI applications. By harnessing the capabilities of these chips, Amazon is positioning itself as a fierce competitor in the ever-evolving AI landscape.

The development of Trainium chips also relies on collaboration, notably with companies like Anthropic. This partnership is pivotal because it gives Amazon direct feedback on chip performance and on the adjustments needed for future iterations. The insights gained from working with AI companies enable Amazon to refine its chip designs from one generation to the next. As the projected performance of Trainium 3, set to be released soon, suggests, gains in chip efficiency are expected to translate directly into better AI model training, reshaping how machine learning tasks are executed.

Project Rainier: A New Era in Cloud Computing

At the heart of Amazon’s initiatives lies Project Rainier, which aims to establish one of the largest AI compute clusters globally, built upon the Trainium chips. This immense infrastructure growth responds to the escalating competitive pressures from other tech giants such as Microsoft, Google, and Meta. By investing approximately $100 billion in various AWS facilities, Amazon is not merely chasing competitors but paving the way for a monumental shift in cloud computing capabilities. The scale of Project Rainier reflects a strategic vision that prioritizes the creation of an advanced AI-ready infrastructure capable of supporting next-generation AI models.

Moreover, the investment emphasizes a shift from traditional cloud computing models to a hyperscaler approach, allowing companies to exploit the robust AI capabilities needed in a data-driven world. As Project Rainier evolves, it is expected to house hundreds of thousands of Trainium chips, laying the groundwork for innovations that could lead to Artificial General Intelligence (AGI). This monumental project showcases how the interplay between enhanced chip technology and large-scale infrastructure can redefine the future trajectory of AI development and deployment.

The Synergy Between Anthropic and Amazon

Anthropic’s partnership with Amazon offers a fascinating glimpse into the collaborative dynamics shaping the AI development landscape. Holding a minority stake in Anthropic and investing heavily in the company, Amazon is using this relationship to test and refine its AWS cloud services under real-world, resource-intensive conditions. This strategic alliance mirrors that of Microsoft with OpenAI, emphasizing the trend of tech giants aligning closely with promising startups to harness innovative technologies while solidifying their position within the AI market.

The contributions of Anthropic towards optimizing the Trainium chips demonstrate the importance of inter-company collaboration in achieving breakthroughs. Anthropic’s direct engagement with AWS has allowed Amazon to tailor the chips to better suit AI tasks, ultimately leading to enhanced performance and efficiency. As both companies continue to innovate, their partnership not only drives Amazon’s chip development but also represents a self-reinforcing cycle where the cloud service provider enhances AI capabilities while capturing a growing market of AI developers and researchers.

Amazon Web Services: Redefining Cloud Capabilities with AI Solutions

Amazon Web Services (AWS) stands as a vital pillar of Amazon’s tech empire, particularly in the area of cloud computing. Through AI-specific innovations like the Trainium chips, AWS is redefining what cloud infrastructure can achieve for businesses seeking to implement machine learning solutions. The necessity for high-performance chips tailored for AI applications has created an influx of enterprises transitioning towards customized solutions, establishing AWS as a go-to platform for future AI developments. The substantial financial backing being funneled into AWS emphasizes Amazon’s commitment to remaining ahead in the rapidly evolving cloud market.

Furthermore, AWS’s adoption of in-house chip designs signifies a move towards autonomy, reducing reliance on external chip suppliers like Nvidia. This strategic pivot empowers AWS to offer competitive pricing and scalable solutions that can cater to diverse business needs, significantly lowering the barrier for enterprises looking to adopt AI capabilities. The resulting ecosystem not only enhances AWS’s market share but also positions it as a leader in cloud computing with AI functionality built in.

The Role of Annapurna Labs in Amazon’s Chip Development

Annapurna Labs, the semiconductor design division within Amazon, plays a crucial role in the development and optimization of the Trainium chips. Led by Rami Sinno, this unit epitomizes Amazon’s strategy of leveraging specialized knowledge to innovate in complex technology fields. With a focused agenda on AI chip design, Annapurna’s engineers are not only tasked with creating the chips but are also involved in iterating on feedback from users like Anthropic, which directly influences chip capabilities and performance enhancements.

The collaborative efforts within Annapurna Labs streamline the design process, allowing for rapid prototyping and testing of advancements such as the anticipated Trainium 3. As these engineers continue to adapt and refine their designs based on real-world applications, the capabilities of Amazon’s chips will progressively surpass existing benchmarks within the industry, potentially reshaping how AI systems are built and deployed globally. This forward-thinking approach reaffirms Amazon’s commitment to being an industry leader amidst intense competition.

Competitive Landscape: Amazon vs. Other Tech Giants

As competition intensifies within the AI realm, Amazon’s push with Trainium chips and Project Rainier establishes a direct challenge to tech giants like NVIDIA, Google, and Microsoft. The industry’s focus on building proprietary chips to support advanced AI models illustrates a larger trend toward customization and efficiency. Although companies like NVIDIA have dominated the market, the shift towards in-house chip development signifies a potential paradigm shift as competitors attempt to assert their independence from external suppliers while driving innovation at a quicker pace.

Furthermore, the integration of proprietary chips like Amazon’s Trainium into vast data clusters signals a move towards highly specialized environments tailored for complex AI tasks. Companies are investing heavily to create these hyperscaler environments, ensuring that they can meet the demands of future AI developments. With Amazon’s ambitions to build one of the largest AI compute clusters as a response to initiatives announced by Microsoft and OpenAI, the competitive landscape is only set to grow more intense, forcing all players to innovate at unprecedented rates.

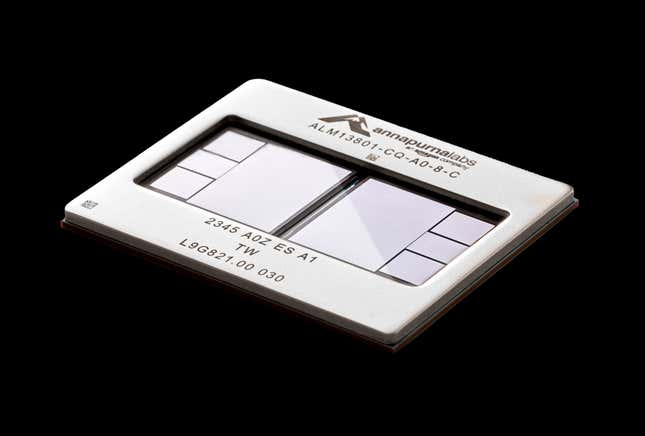

Exploring the Advanced Features of Trainium 2

The Trainium 2 chip stands at the forefront of Amazon’s AI endeavors, embodying advancements designed specifically for machine learning applications. Structured around a high-density layout with billions of transistors embedded in its design, the Trainium 2 boasts efficiencies that set it apart from other offerings in the marketplace. The chip’s capacity to drive down operational costs for training complex models makes it an attractive proposition for companies looking to deploy AI in scalable environments, positioning it as a tailored solution for modern computational demands.

In contrast to conventional chip designs that rely heavily on versatility, Trainium 2 chips prioritize performance in AI-focused tasks. This alignment with specific requirements enhances the overall efficiency for high-throughput workloads, marking a key difference from generic solutions available. This targeted functionality not only provides Amazon a competitive edge in the AI race but also contributes toward making AI capabilities more accessible and affordable for companies of all sizes looking to integrate advanced technologies into their operations.

Future Innovations: Anticipating Trainium 3 and Beyond

With Trainium 3 on the horizon, Amazon is poised to further elevate its chip technology, promising to double the speeds of its predecessor while also enhancing energy efficiency by 40%. Such advancements could redefine the computational landscape by allowing AI models to train faster and achieve higher performance with less energy consumption. This continuous evolutionary cycle exemplifies how the AI industry requires constant innovation to keep pace with burgeoning demand and competitive pressure, making the upcoming Trainium 3 a pivotal player in this fast-paced environment.
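To make those two figures concrete, here is a rough back-of-the-envelope sketch in Python. It assumes that "double the speed" means the same training job finishes in half the time, and that "40% more energy efficient" means 1.4 times as much work per unit of energy; both readings are interpretations rather than published definitions. The function name and the baseline numbers (100 hours, 700 kWh) are hypothetical and chosen only for illustration.

```python
def project_next_gen(baseline_hours: float,
                     baseline_energy_kwh: float,
                     speedup: float = 2.0,
                     efficiency_gain: float = 0.40) -> tuple[float, float]:
    """Estimate time and energy for the same job on a faster, more efficient chip.

    Assumptions (not from any published spec):
      - speedup = 2.0 means the job takes half as long.
      - efficiency_gain = 0.40 means 1.4x as much work per kWh,
        so the same job needs 1 / 1.4 of the original energy.
    """
    hours = baseline_hours / speedup
    energy_kwh = baseline_energy_kwh / (1.0 + efficiency_gain)
    return hours, energy_kwh


# Hypothetical baseline: a job that takes 100 hours and 700 kWh today.
hours, energy = project_next_gen(baseline_hours=100.0, baseline_energy_kwh=700.0)
print(f"Projected: {hours:.1f} h, {energy:.1f} kWh")
```

Under these assumptions the energy saving for a fixed job is roughly 29% (1 − 1/1.4), not 40%, which is why it matters how the efficiency claim is defined.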

Moreover, the research and development processes for Trainium chips utilize advanced neural networks that analyze performance data to drive the design of future generations. This integration of AI within chip development not only accelerates the innovation timeline but also taps into sophisticated feedback mechanisms that enhance chip capabilities iteratively. By maintaining a cycle where AI influences chip design, Amazon is constructing an ecosystem that propels its technology deeper into the AI landscape, paving the way for potentially groundbreaking developments in the years to come.

Frequently Asked Questions

What are Amazon Trainium chips and how do they relate to AI computing?

Amazon Trainium chips are custom-designed AI chips developed by Amazon Web Services (AWS) to enhance the training and performance of artificial intelligence models. Specifically, the latest iteration, Trainium 2, aims to provide substantial computational power tailored for AI workloads, allowing companies like Anthropic to train complex AI models more efficiently.

How does Project Rainier leverage Amazon Trainium chips?

Project Rainier is an ambitious initiative by Amazon that is expected to house hundreds of thousands of Amazon Trainium chips in one of the largest data center clusters ever built. This project aims to provide significant computational resources for training advanced AI models, including those developed by Anthropic, thus enhancing AWS’s capabilities in the AI domain.

What role does Annapurna Labs play in the development of Amazon Trainium chips?

Annapurna Labs is the chip design unit of Amazon’s AWS, responsible for creating the Trainium chips. The engineering team, led by Rami Sinno, collaborates closely with AI companies like Anthropic to ensure that the chips meet the specific needs of modern AI workloads, fostering innovation in AI hardware.

How do Amazon Trainium chips compare to Nvidia’s offerings?

Amazon Trainium chips are specifically designed for AI workloads, offering a custom alternative to Nvidia’s GPUs. While Nvidia remains a leading supplier of AI chips, Amazon’s focus on in-house chip development through Trainium is aimed at reducing dependence on Nvidia and optimizing performance for AWS clients.

Will Amazon continue to develop the Trainium chip series beyond Trainium 2?

Yes, Amazon is actively working on the next generation of its chips, including Trainium 3, which is set to be released later this year. This chip is expected to be twice as fast and 40% more energy efficient than its predecessor, illustrating Amazon’s commitment to continuous innovation in AI computing.

How does Anthropic benefit from using Amazon’s Trainium chips?

Anthropic benefits from Amazon’s Trainium chips by gaining access to enhanced computational power specifically designed for AI training. This relationship allows Anthropic to optimize its models on AWS infrastructure, potentially achieving significant performance improvements for its AI systems.

What makes Project Rainier a significant milestone for Amazon Web Services?

Project Rainier marks a significant milestone for AWS as it aims to build one of the world’s largest AI compute clusters using Amazon Trainium chips. This initiative not only positions Amazon as a competitor in the AI landscape but also highlights its investment in advanced infrastructure to support the growing demand for AI capabilities.

How do the Trainium chips support the development of Artificial General Intelligence (AGI)?

The computational capabilities of Amazon’s Trainium chips are expected to contribute significantly to the development of AI models that could approach Artificial General Intelligence (AGI). By providing the necessary power to train complex models more effectively, these chips could play a crucial role in realizing advanced AI systems capable of outperforming human experts in various fields.

| Key Point | Details |
|---|---|
| Introduction of Amazon Trainium 2 Chips | Amazon unveiled the Trainium 2 chip, designed for AI, featuring billions of transistors (microscopic electrical switches) etched into silicon. |
| Need for In-House Chips | Growing competition and heavy reliance on Nvidia, which dominates AI chip production, are driving Amazon and other tech giants to create their own chips. |
| Project Rainier | Aims to establish one of the world’s largest AI compute clusters, using Trainium 2 chips for AI training. |
| Partnership with Anthropic | Anthropic, a client of Amazon, received significant investment from Amazon and is using the Amazon Trainium chips to develop AI models. |
| Amazon’s Competitive Strategy | Amazon’s chips aim to reduce dependency on Nvidia while improving efficiency in AI model training, leveraging Anthropic’s feedback for development. |
| Future Developments | The upcoming Trainium 3 chip is expected to be twice as fast and 40% more energy efficient than Trainium 2, indicating rapid evolution in chip technology. |

Summary

Amazon Trainium chips represent a strategic innovation in the competitive AI landscape. By advancing its in-house chip production through initiatives like Project Rainier and collaborations with companies such as Anthropic, Amazon is positioning itself as a formidable player in the growing demand for AI computational resources. The Trainium 2 chips signify a critical shift in how tech giants are investing in technology that not only enhances their own capabilities but also revolutionizes the infrastructure needed for future AI advancements.